In 2026, the best AI writing environment is less about raw model intelligence and more about workflow fit. ChatGPT with GPT-5.4 Canvas, Claude with Opus 4.7 Artifacts, Gemini, and GPT-5.5-style agentic systems each serve different writing, coding, research, and production needs.

Which AI writing environment is best overall in 2026?

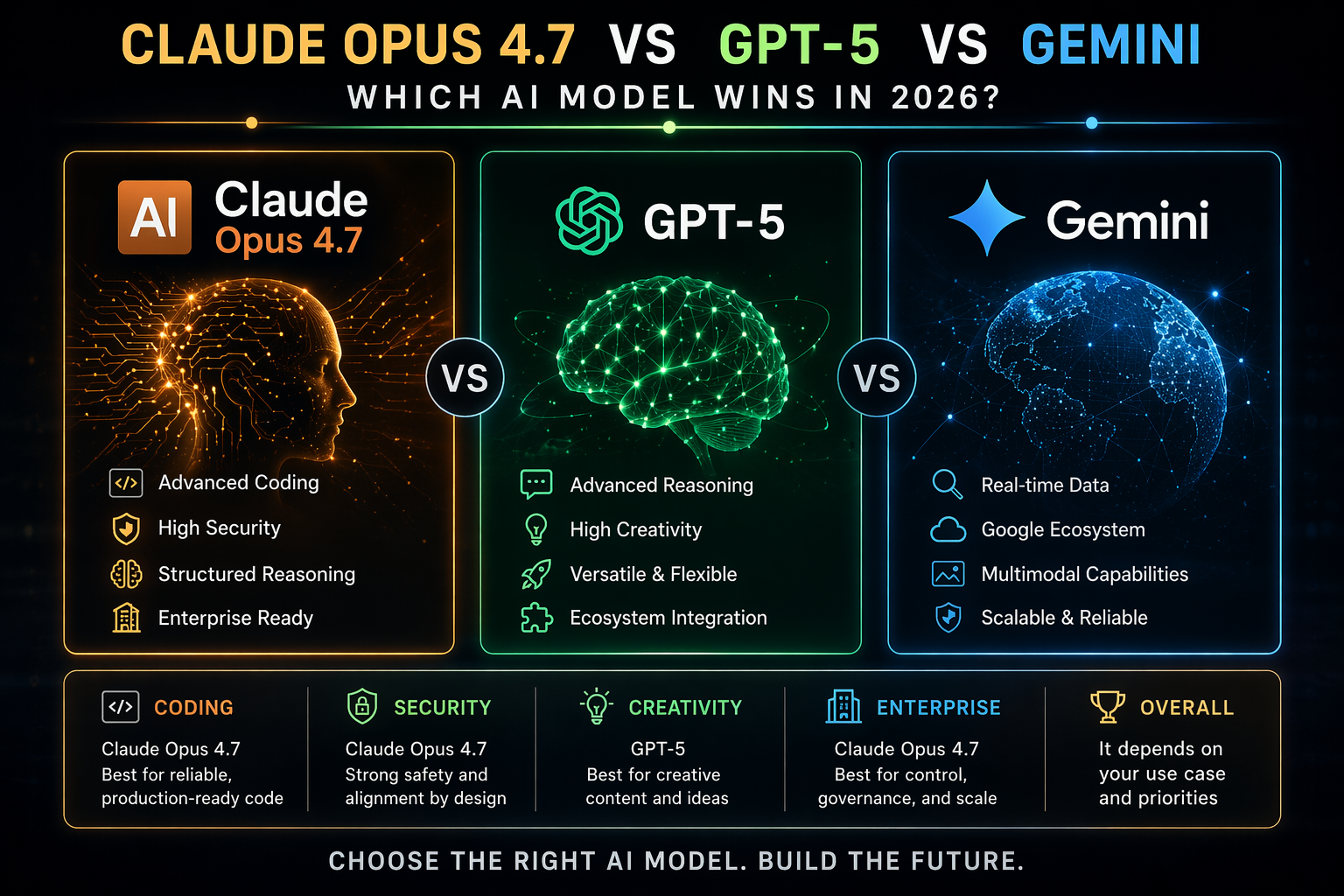

GPT-5.4 Canvas is the best overall AI writing environment for most writers because it is flexible, fast, and well suited to drafting, editing, rewriting, and content planning. Claude Opus 4.7 Artifacts is the better choice when the writing project depends on complex reasoning, code generation, structured documents, or visual-context analysis.

The answer is not a simple “Claude Opus 4.7 vs ChatGPT” winner. ChatGPT remains the more versatile mainstream writing workspace, while Claude often feels stronger when the document is technical, analytical, or tied to code and tools.

| Environment | Best For | Main Strength | Main Limitation |

|---|---|---|---|

| GPT-5.4 Canvas | General writing, editing, brainstorming | Fast drafting and broad workflow flexibility | Not always the top coding benchmark leader |

| Claude Opus 4.7 Artifacts | Technical writing, coding, structured reasoning | Strong coding, orchestration, and long-form coherence | Can feel less universal for quick content production |

| GPT-5.5 agentic workflows | Autonomous research, agents, tool use | Reportedly stronger agentic performance | May be overkill for ordinary writing |

| Gemini | Google-native research and multimodal work | Strong integration with search and media workflows | Writing experience depends heavily on ecosystem fit |

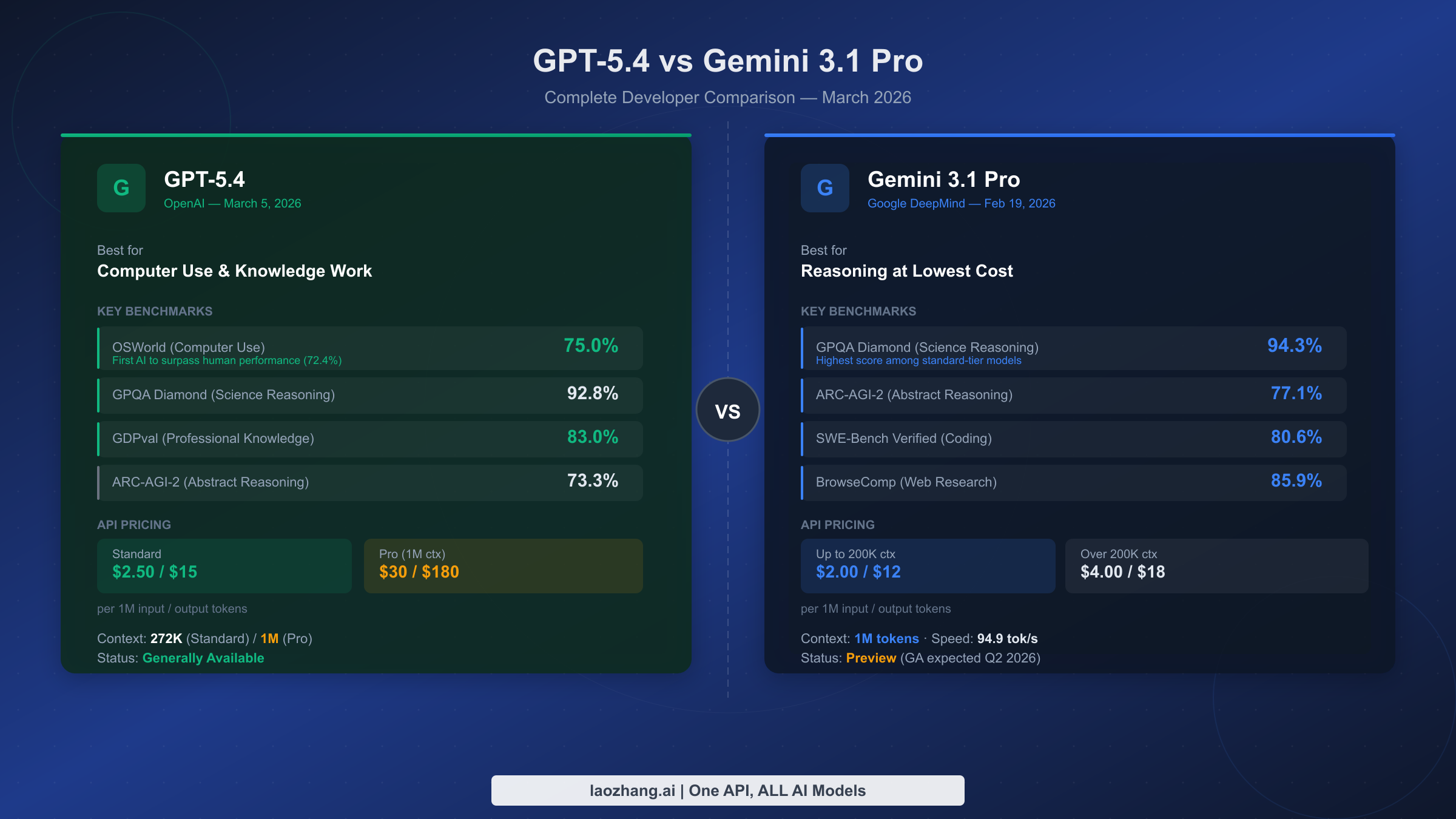

How do GPT-5.4 Canvas and Claude Opus 4.7 Artifacts compare on benchmarks?

In reported Opus 4.7 vs GPT-5.4 benchmark comparisons, Claude Opus 4.7 tends to lead in coding-focused and complex orchestration tasks. GPT-5.4 remains highly competitive as a general model and is often preferred for broad editorial workflows.

Benchmarks are useful, but they do not fully predict writing productivity. A model that wins a coding benchmark may not be the best tool for a novelist, marketer, editor, analyst, or SEO strategist.

The most useful benchmark categories for writers are reasoning quality, rewrite control, long-context reliability, instruction following, tool use, and formatting consistency. Claude Opus 4.7 is especially strong when the output must remain logically structured across long or technical documents.

Which is better for coding, Opus 4.7 or GPT-5.4?

Claude Opus 4.7 is generally the stronger choice for coding, with reported comparisons placing Opus ahead on coding benchmarks, including figures around 87.6% in some 2026 evaluations. GPT-5.4 is still excellent for code explanation, debugging support, and mixed writing-plus-code workflows.

For software documentation, API references, developer tutorials, and code-adjacent content, Claude Artifacts can be more convenient because it keeps generated code and structured outputs visible. It is particularly useful when the writing environment must also function as a lightweight development workspace.

- Choose Claude Opus 4.7 Artifacts for code generation, refactoring, technical specs, and interactive prototypes.

- Choose GPT-5.4 Canvas for developer education, explanatory drafts, tutorials, and mixed editorial work.

- Choose GPT-5.5-style agents when the coding task requires autonomous multi-step execution.

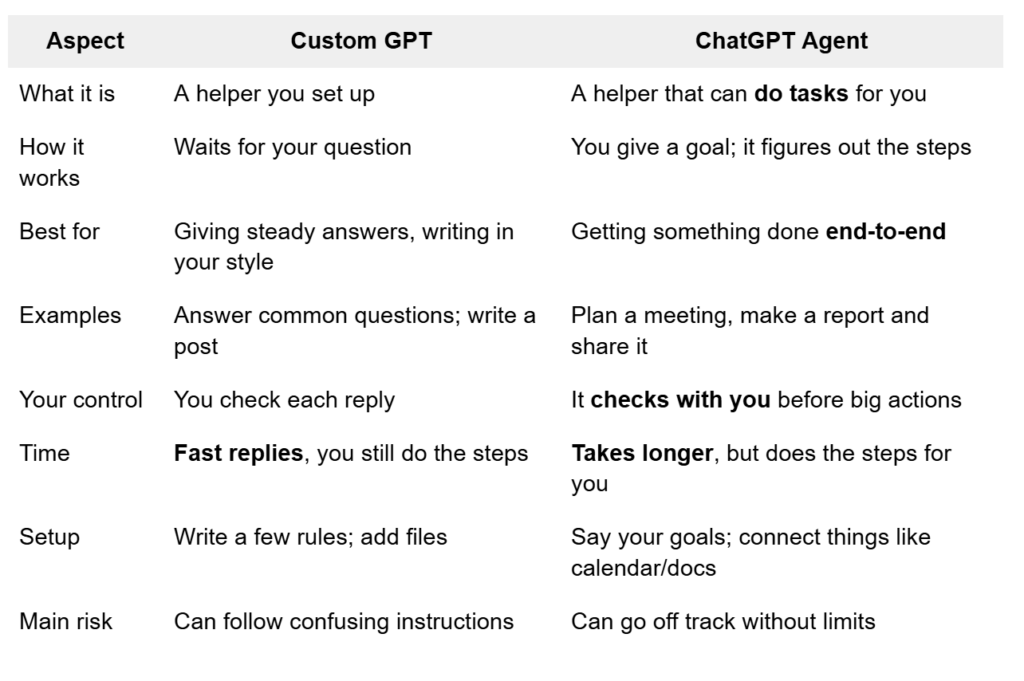

Which is better for agentic workflows, GPT-5.4 or Claude Opus 4.7?

Claude Opus 4.7 is strong at multi-tool orchestration, while GPT-5.5 is often reported as stronger for agentic performance than GPT-5.4 and Opus 4.7. GPT-5.4 Canvas is best when the human remains actively involved in planning, editing, and approving each step.

Agentic writing workflows include research collection, outline generation, source comparison, draft production, image interpretation, fact checking, and publishing preparation. Claude is attractive when the workflow involves structured artifacts, code, tables, and repeated tool calls.

For most writers, full autonomy is less important than controllability. GPT-5.4 Canvas is often the safer environment when tone, brand voice, and editorial judgment matter more than independent task execution.

How should writers choose between GPT-5.4 Canvas and Claude Opus 4.7 Artifacts?

Writers should choose GPT-5.4 Canvas for general content creation and Claude Opus 4.7 Artifacts for technical, analytical, or code-linked writing. The best choice depends on whether the primary job is prose production or structured problem solving.

- Use GPT-5.4 Canvas if you need fast outlines, drafts, rewrites, summaries, emails, scripts, SEO pages, or editorial variants.

- Use Claude Opus 4.7 Artifacts if you need technical documentation, code examples, data explanations, product specs, or complex reasoning.

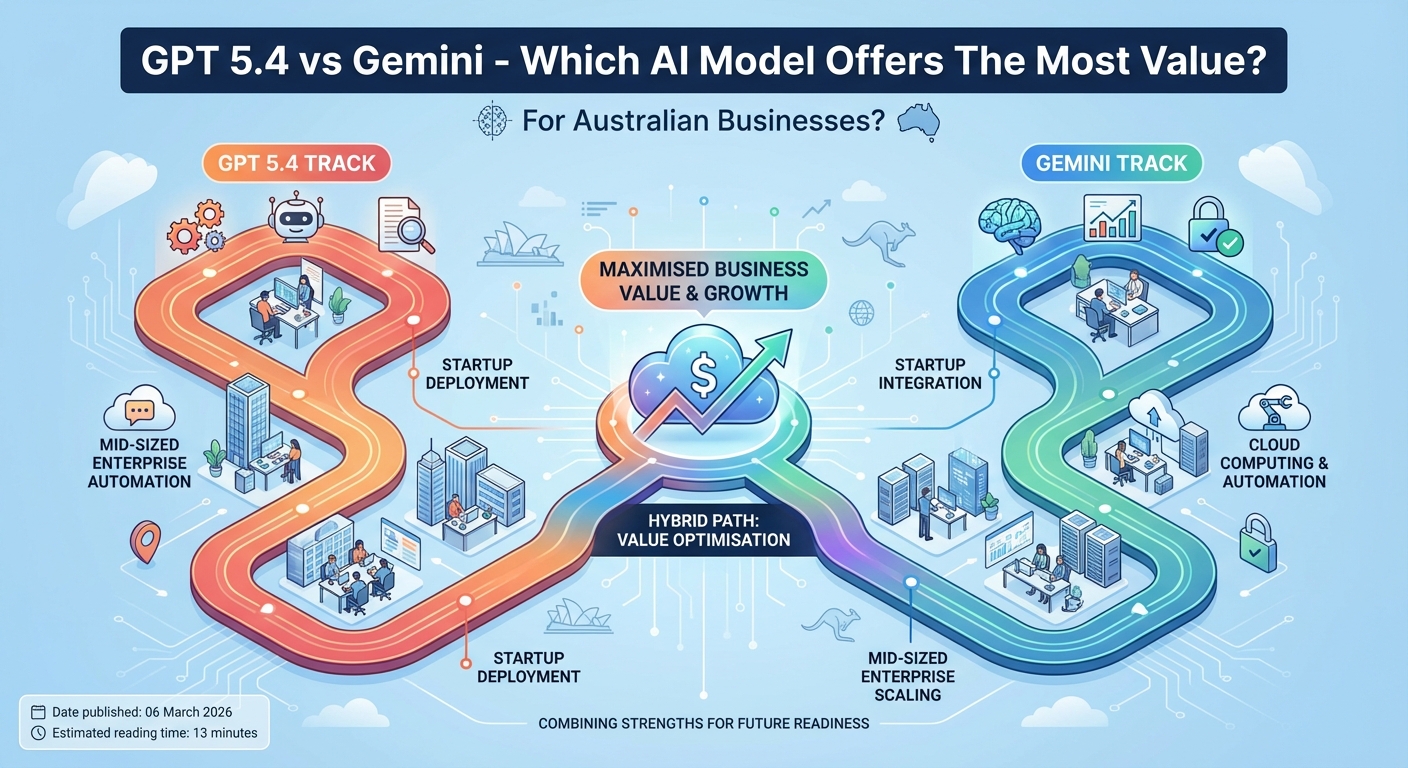

- Use Gemini if your workflow is heavily tied to Google tools, search-based research, or multimodal assets.

- Use an agentic GPT-5.5 workflow if you need autonomous research, planning, tool execution, and iterative completion.

For professional teams, the best setup is often not one model. Many teams draft in ChatGPT, validate technical sections in Claude, and use Gemini for ecosystem-specific research or multimodal support.

What do GPT-5.4 vs Opus 4.7 Reddit discussions usually emphasize?

GPT-5.4 vs Opus 4.7 Reddit discussions usually emphasize practical workflow differences more than benchmark tables. Users often describe GPT-5.4 as easier for everyday writing and Claude Opus 4.7 as better for coding, careful reasoning, and artifact-based outputs.

GPT-5.5 vs Opus 4.7 Reddit comparisons often focus on agentic behavior. The common pattern is that GPT-5.5 is discussed as stronger for autonomous agents, while Opus 4.7 is praised for coding quality, multi-step coherence, and tool-heavy tasks.

Reddit feedback is useful because it reflects real workflows, but it is not a controlled benchmark. Treat it as qualitative evidence, especially for usability, latency, pricing complaints, and long-session reliability.

How does Claude Opus 4.7 compare with Gemini?

Claude Opus 4.7 is usually the better choice for coding, structured writing, and long-form reasoning, while Gemini is strongest when the workflow benefits from Google ecosystem integration. For research-heavy and multimodal work, Gemini can be a serious alternative to both Claude and ChatGPT.

The “Claude Opus 4.7 vs Gemini” decision depends on context. Claude is more attractive for technical composition and artifacts, while Gemini is more attractive for users already working across Google Search, Workspace, video, images, and cloud-connected productivity tools.

What are the most common questions about GPT-5.4 Canvas and Claude Opus 4.7 Artifacts?

The most common questions focus on ChatGPT versus Claude, benchmark performance, coding ability, pricing, Reddit sentiment, and Gemini alternatives. The short answer is that GPT-5.4 Canvas is the safer default for writers, while Claude Opus 4.7 Artifacts is the specialist choice for technical and structured work.

Is Claude Opus 4.7 better than ChatGPT?

Claude Opus 4.7 is better than ChatGPT for many coding, reasoning, and artifact-based workflows. ChatGPT with GPT-5.4 Canvas is usually better for general writing speed, brainstorming, rewriting, and broad content production.

Is Opus 4.7 better than GPT-5.4 for coding?

Yes, Opus 4.7 is generally the stronger coding choice based on reported benchmark comparisons. GPT-5.4 remains highly capable for explanations, debugging support, and developer-focused writing.

Is GPT-5.5 better than Opus 4.7?

GPT-5.5 is often described as stronger for agentic workflows, while Opus 4.7 is stronger for coding and multi-tool structured tasks. The better model depends on whether you need autonomous execution or precise technical output.

Which platform is best for professional writers?

GPT-5.4 Canvas is the best default for professional writers who need speed, flexibility, and editorial control. Claude Opus 4.7 Artifacts is better for writers producing technical documentation, software content, analytical reports, or structured deliverables.

![Claude vs. ChatGPT: Which is best? [2026]](https://images.ctfassets.net/lzny33ho1g45/4vz596IkendsVTaLDpQ3c/34169ba2f6a348041d630cff198903ce/chatgpt-vs-claude.jpg?fm=jpg&q=31&fit=thumb&w=1520&h=760)