Autonomous agents don’t fail because they “aren’t smart enough” most of the time. They fail because one model loses the thread after three tool calls, over-plans the wrong thing, or confidently breaks a workflow that needed patience more than flash.

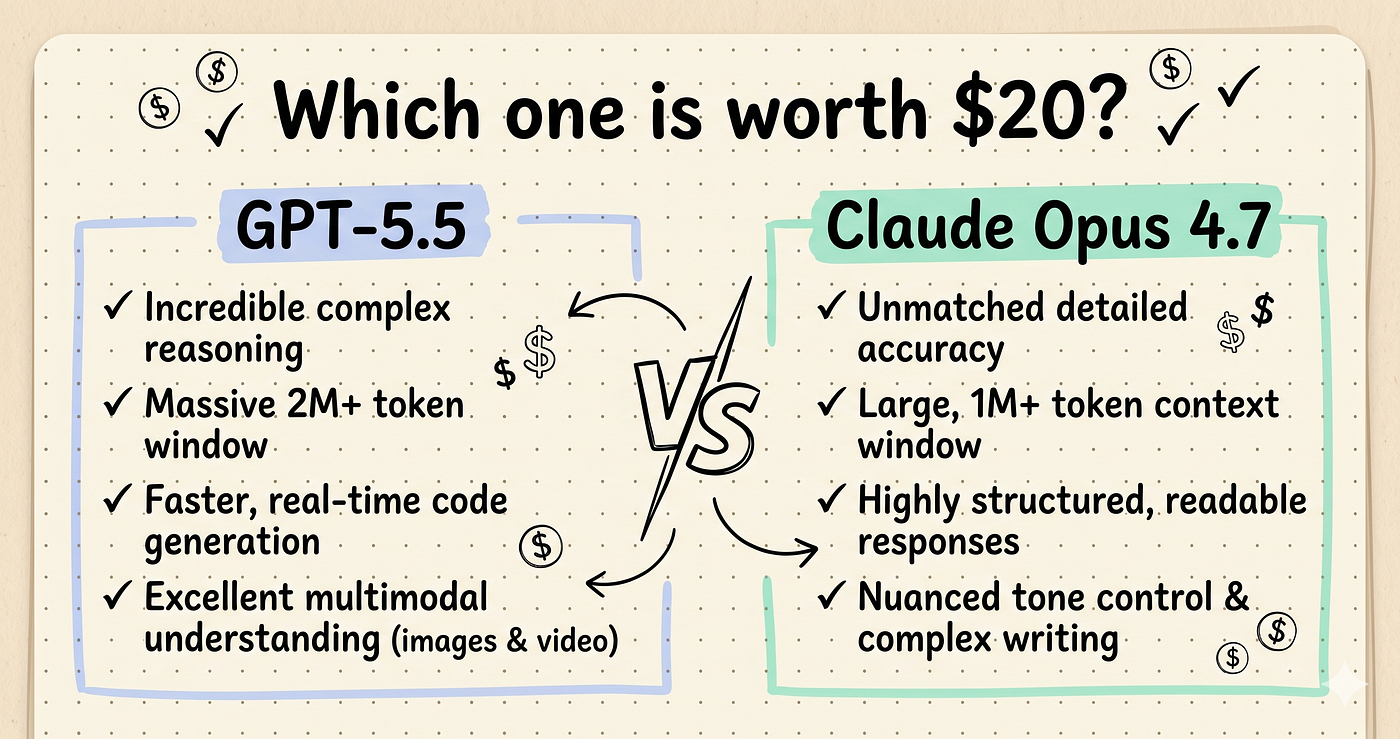

If you’re choosing between GPT-5.5 Thinking and Claude 4.7 Opus, the real question is not “Which model is smarter?” It’s: which one can reliably plan, use tools, recover from mistakes, and finish work without you babysitting it?

GPT-5.5 Thinking vs Claude 4.7 Opus: The Short Version

Both models are elite. If you are building agents that browse, code, call APIs, edit files, use MCP servers, manage documents, and make decisions across several steps, both can perform at a very high level.

But the difference shows up under pressure. GPT-5.5 Thinking tends to front-load its answer: it gives a dense, ambitious first response with a lot packed in. That is great when you want a big plan, a strategy, or a complete first draft.

Claude 4.7 Opus is usually more structured, more conversationally stable, and better at follow-ups. It often feels less like it is trying to win the first response and more like it is trying to complete the entire mission.

| Category | GPT-5.5 Thinking | Claude 4.7 Opus | Winner |

|---|---|---|---|

| Multi-tool workflows | Excellent, but can over-compress plans | More reliable orchestration and recovery | Claude 4.7 Opus |

| Reasoning depth | Very strong, dense first pass | Strong, clearer step-by-step structure | Tie, slight edge by use case |

| Speed | Often feels faster for first responses | Can be more deliberate | GPT-5.5 Thinking |

| Writing | Bold, concise, high-density drafts | Natural, organized, easier to refine | Claude 4.7 Opus |

| Coding agents | Strong implementation speed | Better long-context debugging and tool discipline | Claude 4.7 Opus |

| Cost efficiency | Potentially efficient if fewer tokens solve the job | Potentially efficient if fewer workflow failures occur | Depends on workload |

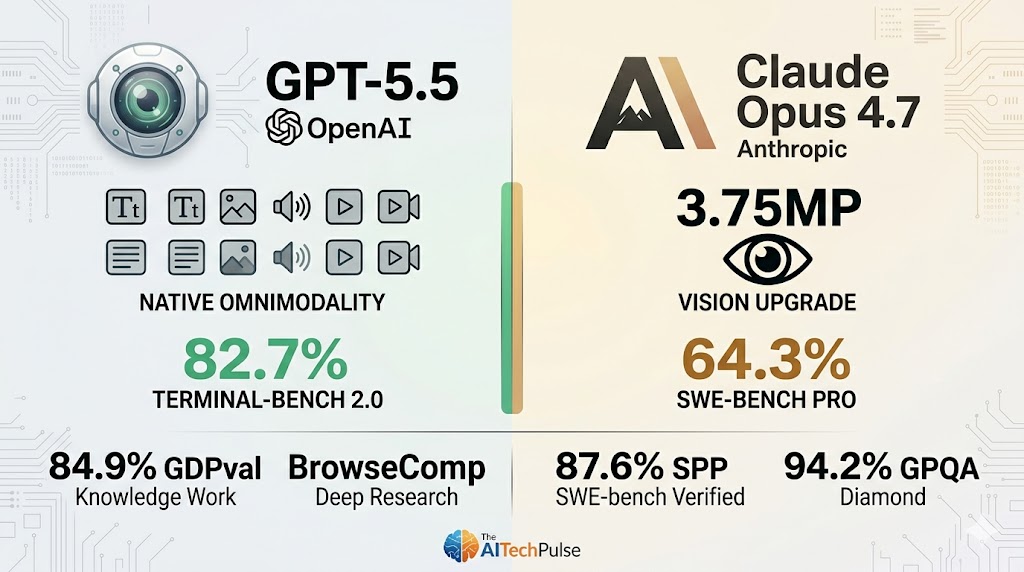

Benchmark Comparison: What the Numbers Actually Mean

Benchmarks are useful, but only if you understand what they are testing. For autonomous agent workflows, the most important benchmarks are not just trivia, math, or coding puzzles. You want benchmarks that measure tool use, operating system tasks, planning, recovery, and multi-step execution.

MCP-Atlas: Claude 4.7 Opus Has the Edge

On MCP-Atlas, which measures multi-tool workflow orchestration, Claude 4.7 Opus leads with 77.3% versus GPT-5.5 Thinking at 75.3%.

A two-point gap may sound small, but for autonomous agents, it matters. MCP-style workflows are where agents need to:

- Choose the right tool at the right time

- Track previous tool outputs

- Avoid repeating failed calls

- Chain actions without losing the original goal

- Recover when a tool result is incomplete or unexpected

That is exactly where Claude 4.7 Opus feels more dependable. It is not just answering; it is managing the workflow.

OSWorld: The 5.7-Point Gap Is a Big Deal

On OSWorld, which evaluates real computer-use tasks, GPT-5.5 Thinking reportedly scores 58.6%, with Claude 4.7 Opus ahead by 5.7 points. That puts Claude around 64.3% in this comparison.

In practice, that gap is meaningful. OS-style tasks are messy. The model has to read screens, understand state, decide what to click or type, and continue after small mistakes. A 5.7-point advantage can mean the difference between an agent that completes a workflow and one that gets stuck halfway through a settings page.

For teams building browser agents, desktop agents, QA automation agents, or research assistants, Claude’s advantage here is one of the strongest arguments in its favor.

Reasoning: Dense Intelligence vs Structured Persistence

GPT-5.5 Thinking is impressive when you ask for a complex answer upfront. It often gives you a highly compressed response with strategy, tradeoffs, edge cases, and implementation notes all in one pass.

That makes it excellent for:

- Initial architecture planning

- Brainstorming agent designs

- Generating dense technical specs

- Evaluating multiple options quickly

- Creating first-draft code or documentation

Claude 4.7 Opus, however, tends to win when the task needs ongoing reasoning over many turns. It is usually better at keeping a clean structure, asking useful clarifying questions, and maintaining the intent of the workflow.

If GPT-5.5 feels like a brilliant consultant dropping a complete memo on your desk, Claude 4.7 Opus feels like a senior operator sitting beside you until the job is done.

Cost: Don’t Just Compare Token Prices

When people search for GPT 5.5 vs Opus 4.7 pricing, they often want a simple winner. But for agentic work, the cheapest model on a price sheet is not always the cheapest model in production.

You need to think in terms of effective task cost, not just input and output token cost.

Ask these questions:

- How many attempts does the model need to finish the task?

- How often does a human need to intervene?

- Does the model make expensive tool calls unnecessarily?

- Does it produce bloated outputs that increase token usage?

- Does it fail late in the workflow, after already spending money?

GPT-5.5 Thinking can be cost-effective when its dense first answer reduces back-and-forth. Claude 4.7 Opus can be cost-effective when its stronger workflow discipline prevents retries, failed automations, and manual cleanup.

For production agents, Claude may cost more per interaction but less per completed task if your workflows are complex. For simpler tasks, GPT-5.5 Thinking may give you better throughput.

Speed: GPT-5.5 Feels Snappier, Claude Feels Safer

If you care about first-response speed, GPT-5.5 Thinking often feels faster and more aggressive. It gets to the point quickly and can produce a lot of usable material without needing much prompting.

Claude 4.7 Opus can feel more measured. That is not always a downside. In autonomous workflows, speed is only valuable if the model stays correct. A fast model that makes the wrong tool call can waste more time than a slower model that checks context before acting.

Use GPT-5.5 when you want quick analysis, fast drafting, or rapid option generation. Use Claude when the workflow has more moving parts and mistakes are expensive.

Writing: Which One Is Better?

For people searching GPT 5.5 vs Opus 4.7 for writing, the answer depends on the kind of writing.

GPT-5.5 Thinking is strong for punchy, information-dense content. It is good at frameworks, outlines, direct explanations, and polished first drafts.

Claude 4.7 Opus is often better for writing that needs flow, tone control, nuance, and iterative editing. It is especially strong when you say, “Keep the same argument, but make it warmer,” or “Rewrite this for a technical buyer without sounding robotic.”

For long-form writing workflows, editorial agents, research synthesis, and brand voice refinement, I would choose Claude 4.7 Opus [AMAZON_LINK].

Coding Agents: Opus 4.7 vs GPT-5.5

For coding, both are excellent. GPT-5.5 Thinking can produce strong implementations quickly, especially when the task is well-scoped. It is the model I would consider when I need a fast prototype, a refactor plan, or a direct answer about architecture.

Claude 4.7 Opus has the advantage in more agentic coding scenarios:

- Editing multiple files

- Following repository conventions

- Debugging from logs and test failures

- Keeping track of long context

- Using tools without derailing the task

If you are building a coding agent that needs to run tests, inspect files, patch code, and continue after errors, Claude 4.7 Opus is the safer default [AMAZON_LINK]. If you are using a human-in-the-loop coding workflow, GPT-5.5 Thinking remains extremely competitive.

Reddit-Style Take: What Users Will Probably Notice

If you look at the kind of comments people make in GPT-5.5 vs Opus 4.7 Reddit discussions, the split usually sounds like this:

- GPT-5.5 fans: “It gives me more upfront. It feels sharper. It gets the idea faster.”

- Claude fans: “It follows instructions better. It is easier to steer. It does not lose the plot.”

That matches the real-world pattern. GPT-5.5 Thinking impresses quickly. Claude 4.7 Opus builds trust over longer sessions.

Which Should You Pick for Autonomous Agent Workflows?

Choose GPT-5.5 Thinking if you need:

- Fast, dense first responses

- Strong general reasoning

- Rapid prototyping

- Concise technical planning

- Lower-latency idea generation

Choose Claude 4.7 Opus if you need:

- Reliable multi-tool orchestration

- Better long-context follow-ups

- Stronger computer-use performance

- Cleaner writing and editing workflows

- More dependable autonomous agents

For most serious agentic systems, my recommendation is simple: use Claude 4.7 Opus as your primary autonomous workflow model [AMAZON_LINK], and keep GPT-5.5 Thinking [AMAZON_LINK] available for fast planning, synthesis, and high-density reasoning tasks.

FAQ

Is Claude 4.7 Opus better than GPT-5.5 Thinking?

For autonomous agent workflows, yes, Claude 4.7 Opus has the edge, especially in multi-tool orchestration and computer-use tasks. GPT-5.5 Thinking is still excellent for fast reasoning and dense first-pass answers.

Which is better for coding: Opus 4.7 or GPT-5.5?

GPT-5.5 is great for fast code generation and architecture ideas. Claude 4.7 Opus is better for coding agents that need to inspect files, use tools, run tests, and debug across multiple steps.

Which model is cheaper?

It depends on current API pricing and the task. For agents, compare cost per completed workflow, not just token pricing. A model that fails less often can be cheaper even if each call costs more.

Which is better for writing?

Claude 4.7 Opus is usually better for long-form writing, editing, tone, and follow-up revisions. GPT-5.5 Thinking is better when you want a dense, sharp first draft or strategic outline.

Should I use both GPT-5.5 and Claude 4.7 Opus?

Yes, if your budget allows it. Use Claude 4.7 Opus for the main agent loop and GPT-5.5 Thinking for planning, summarizing, brainstorming, and quick technical analysis.

Final recommendation: If you are building autonomous agents that need to complete real workflows with minimal supervision, pick Claude 4.7 Opus. If your priority is fast, dense reasoning and strong first drafts, keep GPT-5.5 Thinking in your stack as a powerful secondary model.